After all the connector to Azure Data Lake is using OAuth2, so what’s the problem. I say restriction because it seems like madness. Well my friends, I recommend that we strongly petition Microsoft to lift this restriction.

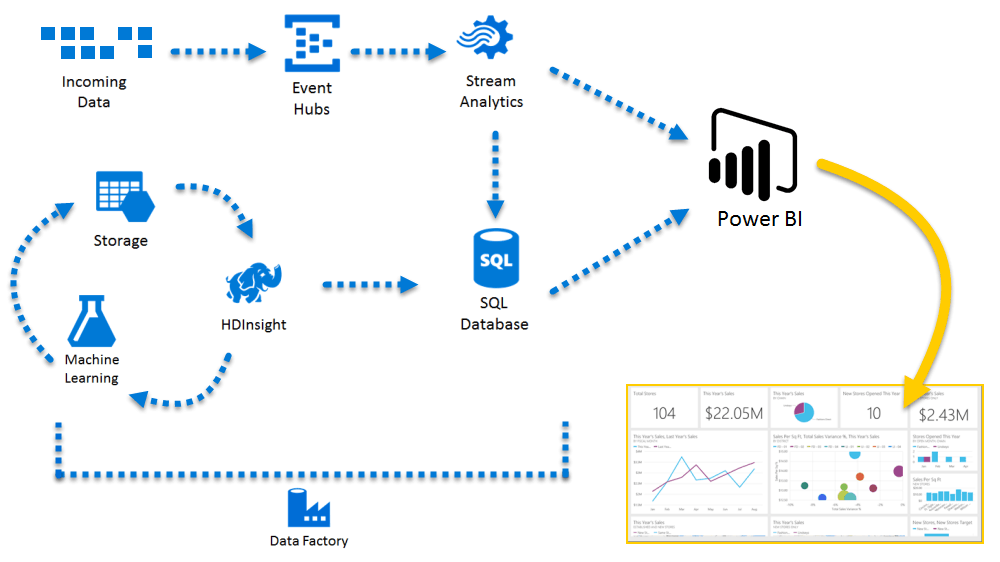

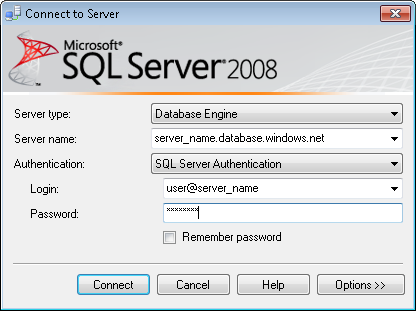

I’ve done it.Ī short-term work around is to refresh your datasets in the desktop app every day and republish new versions. Once these ducks are in line the credentials you supply to for the dataset refresh will be accepted. Then migrate your Data Lake Store to the new subscription. Unfortunately, the only long term thing you can do is setup a new Azure Subscription and make dam sure that it’s linked to your Office 365 office and thus residing in the same tenant. Once you’ve finished cursing, considering everything you’ve developed over the last 6 months in your Azure Subscription. You cannot currently authenticate against an Azure Data Lake Store from across tenants. In this case the disconnection means you will not be able to authenticate your datasets against your Azure Data Lake Store allowing for that very important scheduled data refresh.Ĭoming back to the title of this blog post: This may result in each group of services residing in different directory services or tenants. Problem OverviewĪs most systems and environments evolve its common (given the experience of several customer) to accidentally create a disconnection between your Azure Subscription and your Office 365 environments. A better error message would say invalid credentials for the tenant of the target data source. Now this error is also misleading because the problem is not invalid credentials on the face of it. This is want you’ll encounter.įailed to update data source credentials: The credentials provided for the DataLake source are invalid. If they aren’t and one little ducky has strayed from the pack/group/heard (what’s a collection of ducks?). By ducks I mean your Azure Subscription and Office 365 tenant. This expects several ducks to all be lined up exactly. Maybe.Īutomatic dataset refreshes, not so simple. Sharing, no problem at all, assuming you understand the latest Power BI Premium/Free apps, packs, workspace licencing stuff!… A post for another time. 
Next, you want to share and automatically refresh the data, meaning your audience have the latest data at the point of viewing, given a reasonable schedule. Armed with a valid Office 365/Power BI account the visuals, initial working data, model, measures and connection details for the data source get transferred to the web service version of Power BI, known as. In this scenario, we’ve developed our Power BI workbook in the desktop product and hit publish. This hopefully sets the scene for this posts and starts to allude to the problem your likely to encounter if you want to use your developed visuals beyond your own computer.

It doesn’t even matter about Personal vs Work/School accounts.

To reiterate, this means any Data Lake Store anywhere can be queried and datasets refreshed using local tools. Our local machines are of course external to the concept and context of a Microsoft Cloud tenant and directory. The connector as before can be supplied with any storage ADL:// URL and set of credentials using the desktop Power BI application. This is good news, but doesn’t make any difference to those of us that have already be storing our outputs as files in Data Lake Store. With the recent update to the Power BI desktop application we now find the Azure Data Lake Store connector has finally relinquished its ‘(Beta)’ status and is considered GA. Plus, I’ve included details of what you can currently do if you encounter it. But sadly, does exist and given its relative complexity I think warrants some explanation. Welcome readers, this is a post to define a problem that shouldn’t exist.
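If you want to know whether your own ducks are lined up before you hit the refresh error, one rough way is to compare the tenant IDs of the two directories involved. The sketch below is my own illustration, not anything the Power BI service does: it assumes you know the directory domain behind your Azure Subscription and the one behind Office 365, and it reads the tenant GUID out of the `issuer` value in each directory's public OpenID Connect metadata. The function names and the `contoso`/`fabrikam` domains in the comments are hypothetical.

```python
import json
import re
import urllib.request

# Matches a GUID such as 11111111-2222-3333-4444-555555555555.
_GUID = re.compile(r"[0-9a-f]{8}(?:-[0-9a-f]{4}){3}-[0-9a-f]{12}", re.IGNORECASE)


def tenant_id_from_issuer(issuer: str) -> str:
    """Pull the tenant GUID out of an OpenID Connect issuer URL."""
    match = _GUID.search(issuer)
    if match is None:
        raise ValueError(f"no tenant GUID found in issuer: {issuer!r}")
    return match.group(0).lower()


def fetch_issuer(directory_domain: str) -> str:
    """Read the issuer from a directory's public OpenID metadata (network call)."""
    url = (
        "https://login.microsoftonline.com/"
        f"{directory_domain}/.well-known/openid-configuration"
    )
    with urllib.request.urlopen(url, timeout=10) as response:
        return json.load(response)["issuer"]


def same_tenant(issuer_a: str, issuer_b: str) -> bool:
    """True when both issuer URLs point at the same tenant GUID."""
    return tenant_id_from_issuer(issuer_a) == tenant_id_from_issuer(issuer_b)


# Hypothetical usage; replace the domains with your own directories:
# azure_issuer = fetch_issuer("contoso.onmicrosoft.com")
# office_issuer = fetch_issuer("fabrikam.onmicrosoft.com")
# print("Same tenant:", same_tenant(azure_issuer, office_issuer))
```

If the two tenant IDs differ, you are most likely looking at the cross-tenant situation this post describes, and the scheduled refresh credentials will probably be rejected however correct they are.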
